【全网首发】一次druid数据库连接池连接泄露的排查分析原创

最近项目组某应用将数据库由Oracle切换到了TBase,遇到了数据库连接泄露导致无法创建新连接的问题,下面是问题的分析过程。

问题现象

应用侧异常日志

为了便于阅读,去掉了线程栈中不相关的栈帧。

com.alibaba.druid.pool.GetConnectionTimeoutException: wait millis 5000, active 0, maxActive 30, creating 0, createErrorCount 13047

at com.alibaba.druid.pool.DruidDataSource.getConnectionInternal(DruidDataSource.java:1773)

at com.alibaba.druid.pool.DruidDataSource.getConnectionDirect(DruidDataSource.java:1427)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:5059)

at com.alibaba.druid.filter.logging.LogFilter.dataSource_getConnection(LogFilter.java:917)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:5055)

at com.alibaba.druid.filter.stat.StatFilter.dataSource_getConnection(StatFilter.java:726)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:5055)

at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1405)

at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1397)

at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:100)

at org.springframework.jdbc.datasource.DataSourceTransactionManager.doBegin(DataSourceTransactionManager.java:204)

at org.springframework.transaction.support.AbstractPlatformTransactionManager.getTransaction(AbstractPlatformTransactionManager.java:373)

at org.springframework.transaction.interceptor.TransactionAspectSupport.createTransactionIfNecessary(TransactionAspectSupport.java:430)

at org.springframework.transaction.interceptor.TransactionAspectSupport.invokeWithinTransaction(TransactionAspectSupport.java:276)

at org.springframework.transaction.interceptor.TransactionInterceptor.invoke(TransactionInterceptor.java:96)

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:179)

at org.springframework.aop.framework.JdkDynamicAopProxy.invoke(JdkDynamicAopProxy.java:213)

... ...//省略的栈帧

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

at java.lang.Thread.run(Thread.java:745) Caused by: org.postgresql.util.PSQLException: FATAL: remaining connection slots are reserved for non-replication superuser connections

at org.postgresql.core.v3.ConnectionFactoryImpl.doAuthentication(ConnectionFactoryImpl.java:693)

at org.postgresql.core.v3.ConnectionFactoryImpl.tryConnect(ConnectionFactoryImpl.java:203)

at org.postgresql.core.v3.ConnectionFactoryImpl.openConnectionImpl(ConnectionFactoryImpl.java:258)

at org.postgresql.core.ConnectionFactory.openConnection(ConnectionFactory.java:54)

at org.postgresql.jdbc.PgConnection.<init>(PgConnection.java:263)

at org.postgresql.Driver.makeConnection(Driver.java:443)

at org.postgresql.Driver.connect(Driver.java:297)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:156)

at com.alibaba.druid.filter.FilterAdapter.connection_connect(FilterAdapter.java:787)

at com.alibaba.druid.filter.FilterEventAdapter.connection_connect(FilterEventAdapter.java:38)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:150)

at com.alibaba.druid.filter.stat.StatFilter.connection_connect(StatFilter.java:251)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:150)

at com.alibaba.druid.pool.DruidAbstractDataSource.createPhysicalConnection(DruidAbstractDataSource.java:1659)

at com.alibaba.druid.pool.DruidAbstractDataSource.createPhysicalConnection(DruidAbstractDataSource.java:1723)

at com.alibaba.druid.pool.DruidDataSource$CreateConnectionThread.run(DruidDataSource.java:2838)

从上面线程栈可知,org.postgresql.util.PSQLException导致了com.alibaba.druid.pool.GetConnectionTimeoutException,所以org.postgresql.util.PSQLException是根因:FATAL: remaining connection slots are reserved for non-replication superuser connections,该异常表示TBase Server端该用户的连接数达到了阈值。接下来看一下TBase服务端连接数的情况。

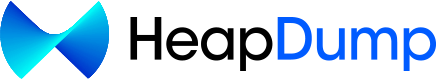

TBase服务端连接数

从上图可知

- 由于达到了TBase Server端连接数2000的阈值,所以导致了应用无法创建连接的异常;

- 随着应用运行,连接数在慢慢增加,很明显存在连接泄露的问题。

基本信息

应用使用的druid连接池,版本是_druid-1.2.8;TBase JDBC Driver是postgresql-42.6.0;TBase Server版本是10.0 @ Tbase_v5.06.4.3 (commit: 6bd7f61dc)_。

JDK版本:

java version "1.8.0_65"

Java(TM) SE Runtime Environment (build 1.8.0_65-b17)

Java HotSpot(TM) 64-Bit Server VM (build 25.65-b01, mixed mode)

druid配置

druid配置包括两部分,分别是:Filter、DataSource。

Filter

<bean id="filter-log4j2" class="com.alibaba.druid.filter.logging.Log4j2Filter">

<property name="connectionLogEnabled" value="true" />

<property name="statementLogEnabled" value="true" />

<property name="statementExecutableSqlLogEnable" value="true" />

<property name="resultSetLogEnabled" value="false" />

</bean>

DataSource

<bean id="dataSource6" class="com.alibaba.druid.pool.DruidDataSource" init-method="init" destroy-method="close">

<property name="driverClassName" value="org.postgresql.Driver" />

<property name="url" value="${db.tbase.url}" />

<property name="username" value="${db.tbase.username}" />

<property name="password" value="${db.tabse.secpwd}" />

<property name="initialSize" value="2" />

<property name="minIdle" value="5" />

<property name="maxActive" value="30" />

<property name="maxWait" value="5000" />

<property name="timeBetweenEvictionRunsMillis" value="60000" />

<property name="minEvictableIdleTimeMillis" value="300000" />

<property name="testWhileIdle" value="true" />

<property name="testOnBorrow" value="false" />

<property name="testOnReturn" value="false" />

<property name="phyTimeoutMillis" value="1800000" />

<property name="poolPreparedStatements" value="false" />

<property name="maxPoolPreparedStatementPerConnectionSize" value="20" />

<!-- 对泄漏的连接 自动关闭 -->

<!-- 打开removeAbandoned功能 -->

<property name="removeAbandoned" value="true" />

<!-- 60秒,也就是1分钟 -->

<property name="removeAbandonedTimeout" value="60" />

<!-- 关闭abanded连接时输出错误日志 -->

<property name="logAbandoned" value="true" />

<!-- 配置监控统计拦截的filters -->

<property name="filters" value="mergeStat" />

<property name="proxyFilters">

<list>

<ref bean="filter-log4j2" />

</list>

</property>

<property name="connectionProperties" value="druid.stat.slowSqlMillis=5000" />

</bean>

问题分析

removeAbandoned

数据库连接泄露,首先想到的是应用程序从数据库连接池获取到连接,使用完之后是否没有释放?

从上面的异常信息看是不存在这种情况的,因为active是0(即当前正在使用的连接数是0),为了便于理解,下面分析下druid这块的逻辑。

从上面可知,druid removeAbandoned相关配置为:

<!-- 对泄漏的连接 自动关闭 -->

<!-- 打开removeAbandoned功能 -->

<property name="removeAbandoned" value="true" />

<!-- 60秒,也就是1分钟 -->

<property name="removeAbandonedTimeout" value="60" />

<!-- 关闭abanded连接时输出错误日志 -->

<property name="logAbandoned" value="true" />

申请连接

以下代码在DruidDataSource类的getConnectionDirect方法中。

DruidPooledConnection poolableConnection ;

... ...

if (removeAbandoned) {

StackTraceElement[] stackTrace = Thread.currentThread().getStackTrace();

poolableConnection.connectStackTrace = stackTrace;

poolableConnection.setConnectedTimeNano();

poolableConnection.traceEnable = true;

activeConnectionLock.lock();

try {

activeConnections.put(poolableConnection, PRESENT);

} finally {

activeConnectionLock.unlock();

}

}

释放连接

以下代码在DruidDataSource类的recycle方法中,该方法是在应用程序将连接归还给连接池的时候调用。

DruidPooledConnection poolableConnection ;

... ...

if (pooledConnection.traceEnable) {

Object oldInfo = null;

activeConnectionLock.lock();

try {

if (pooledConnection.traceEnable) {

oldInfo = activeConnections.remove(pooledConnection);

pooledConnection.traceEnable = false;

}

} finally {

activeConnectionLock.unlock();

}

if (oldInfo == null) {

if (LOG.isWarnEnabled()) {

LOG.warn("remove abandonded failed. activeConnections.size " + activeConnections.size());

}

}

}

如果应用程序没有释放连接,activeConnections会有未释放连接的引用。

释放泄露的连接

DruidDataSource中有个Druid-ConnectionPool-Destroy-XX的线程负责释放连接:

protected void createAndStartDestroyThread() {

destroyTask = new DestroyTask();

if (destroyScheduler != null) {

long period = timeBetweenEvictionRunsMillis;

if (period <= 0) {

period = 1000;

}

destroySchedulerFuture = destroyScheduler.scheduleAtFixedRate(destroyTask, period, period,

TimeUnit.MILLISECONDS);

initedLatch.countDown();

return;

}

String threadName = "Druid-ConnectionPool-Destroy-" + System.identityHashCode(this);

destroyConnectionThread = new DestroyConnectionThread(threadName);

destroyConnectionThread.start();

}

因为destroyScheduler是null,所以使用的是DestroyConnectionThread,每隔timeBetweenEvictionRunsMillis执行一次释放任务。

public class DestroyConnectionThread extends Thread {

public DestroyConnectionThread(String name){

super(name);

this.setDaemon(true);

}

public void run() {

initedLatch.countDown();

for (;;) {

// 从前面开始删除

try {

if (closed || closing) {

break;

}

if (timeBetweenEvictionRunsMillis > 0) {

Thread.sleep(timeBetweenEvictionRunsMillis);

} else {

Thread.sleep(1000); //

}

if (Thread.interrupted()) {

break;

}

destroyTask.run();

} catch (InterruptedException e) {

break;

}

}

}

}

public class DestroyTask implements Runnable {

public DestroyTask() {

}

@Override

public void run() {

shrink(true, keepAlive);

if (isRemoveAbandoned()) {

removeAbandoned();

}

}

}

public int removeAbandoned() {

int removeCount = 0;

long currrentNanos = System.nanoTime();

List<DruidPooledConnection> abandonedList = new ArrayList<DruidPooledConnection>();

activeConnectionLock.lock();

try {

Iterator<DruidPooledConnection> iter = activeConnections.keySet().iterator();

for (; iter.hasNext();) {

DruidPooledConnection pooledConnection = iter.next();

if (pooledConnection.isRunning()) {

continue;

}

long timeMillis = (currrentNanos - pooledConnection.getConnectedTimeNano()) / (1000 * 1000);

if (timeMillis >= removeAbandonedTimeoutMillis) {

iter.remove();

pooledConnection.setTraceEnable(false);

abandonedList.add(pooledConnection);

}

}

} finally {

activeConnectionLock.unlock();

}

if (abandonedList.size() > 0) {

for (DruidPooledConnection pooledConnection : abandonedList) {

final ReentrantLock lock = pooledConnection.lock;

lock.lock();

try {

if (pooledConnection.isDisable()) {

continue;

}

} finally {

lock.unlock();

}

//释放连接

JdbcUtils.close(pooledConnection);

pooledConnection.abandond();

removeAbandonedCount++;

removeCount++;

//打印日志

if (isLogAbandoned()) {

StringBuilder buf = new StringBuilder();

buf.append("abandon connection, owner thread: ");

buf.append(pooledConnection.getOwnerThread().getName());

buf.append(", connected at : ");

buf.append(pooledConnection.getConnectedTimeMillis());

buf.append(", open stackTrace\n");

StackTraceElement[] trace = pooledConnection.getConnectStackTrace();

for (int i = 0; i < trace.length; i++) {

buf.append("\tat ");

buf.append(trace[i].toString());

buf.append("\n");

}

buf.append("ownerThread current state is " + pooledConnection.getOwnerThread().getState()

+ ", current stackTrace\n");

trace = pooledConnection.getOwnerThread().getStackTrace();

for (int i = 0; i < trace.length; i++) {

buf.append("\tat ");

buf.append(trace[i].toString());

buf.append("\n");

}

LOG.error(buf.toString());

}

}

}

return removeCount;

}

从以上分析可知,即使应用没有释放连接,Druid-ConnectionPool-Destroy线程也会释放掉activeConnections中的连接(除非业务线程一直阻塞,连接处于running==true的状态,通过分析线程栈不存在这种情况)。

泄露的连接不是通过连接池申请的?

另一个可能的原因是泄露的连接不是通过连接池申请的,而是应用自己创建出来,但是没有执行关闭连接的操作。所以思路是拿到所有创建连接的线程栈,然后通过分析线程栈找到有哪些地方创建的连接,这里我们使用arthas的watch来完成。

//抓取PgConnection本地端口号和线程栈

watch org.postgresql.core.v3.ConnectionFactoryImpl openConnectionImpl "{returnObj.pgStream.connection.impl.localport,@org.apache.commons.lang3.exception.ExceptionUtils@getStackTrace(new java.lang.Exception())}" -n 99999999 > /xxx/xxx/xxx/xxx.txt &

TBase的数据库连接对象是org.postgresql.jdbc.PgConnection,在PgConnection初始化的时候会调用org.postgresql.core.v3.ConnectionFactoryImpl.openConnectionImpl。

生成的文件中记录了每个数据库连接的【本地端口号】和【线程栈】,通过对线程栈进行分析,发现数据库连接都是通过连接池创建出来的。下面是创建数据库连接的线程栈:

at org.postgresql.core.v3.ConnectionFactoryImpl.openConnectionImpl(ConnectionFactoryImpl.java:330)

at org.postgresql.core.ConnectionFactory.openConnection(ConnectionFactory.java:54)

at org.postgresql.jdbc.PgConnection.<init>(PgConnection.java:263)

at org.postgresql.Driver.makeConnection(Driver.java:443)

at org.postgresql.Driver.connect(Driver.java:297)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:156)

at com.alibaba.druid.filter.FilterAdapter.connection_connect(FilterAdapter.java:787)

at com.alibaba.druid.filter.FilterEventAdapter.connection_connect(FilterEventAdapter.java:38)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:150)

at com.alibaba.druid.filter.stat.StatFilter.connection_connect(StatFilter.java:251)

at com.alibaba.druid.filter.FilterChainImpl.connection_connect(FilterChainImpl.java:150)

at com.alibaba.druid.pool.DruidAbstractDataSource.createPhysicalConnection(DruidAbstractDataSource.java:1659)

at com.alibaba.druid.pool.DruidAbstractDataSource.createPhysicalConnection(DruidAbstractDataSource.java:1723)

at com.alibaba.druid.pool.DruidDataSource$CreateConnectionThread.run(DruidDataSource.java:2838)

既然数据库连接都是通过连接池创建出来的,那么数据库连接应该都是受到连接池管理的。

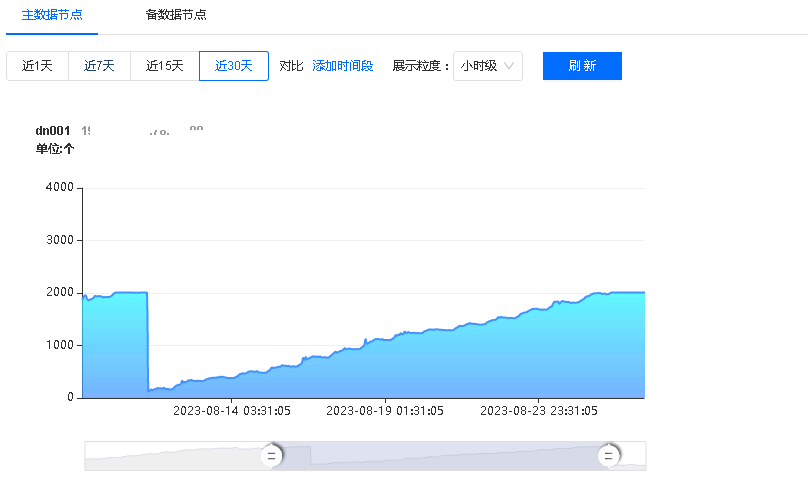

数据库连接池连接与netstat看到的不一致

既然连接都是连接池管理的,那么分析下连接池中的连接信息:

连接池中连接信息

//查看连接池信息

vmtool --action getInstances --className com.alibaba.druid.pool.DruidDataSource --express 'instances.{? #this.dbTypeName=="postgresql"}.{#this}'

//查看连接池中连接本地端口号

vmtool --action getInstances --className com.alibaba.druid.pool.DruidDataSource --express 'instances.{? #this.dbTypeName=="postgresql"}.{#this.connections[0].conn.connection.getQueryExecutor().pgStream.connection.impl.localport}' -x 1

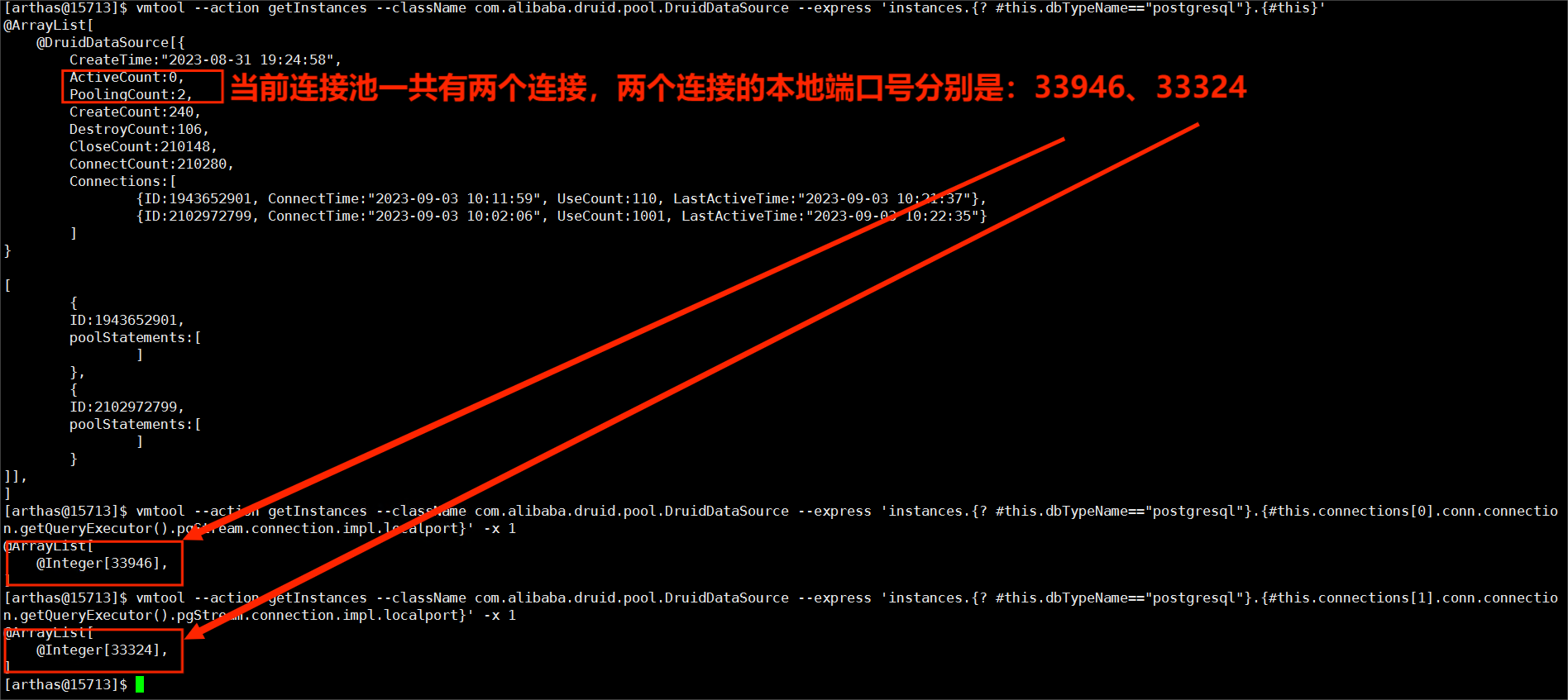

netstat连接信息

netstat共查出8个连接,其中只有33946和33324是在连接池中的,其他6个都不在连接池中。

分析到这里,可以确定存在连接泄露,即有连接没有被关闭的情况,那么哪些地方进行了连接关闭呢?

连接关闭的路径有哪些?

上面已经抓取到了每个PgConnection的创建路径,现在抓取每个PgConnection的关闭路径,如果一个PgConnection有创建但是没有关闭,那么这个PgConnection就是泄露的连接。

//抓取PgConnection本地端口号和线程栈

watch org.postgresql.jdbc.PgConnection close "{target.getQueryExecutor().pgStream.connection.impl.localport,@org.apache.commons.lang3.exception.ExceptionUtils@getStackTrace(new java.lang.Exception()),throwExp}" -b -n 99999999 > /xxx/xxx/xxx/xxx.txt &

通过分析以上抓取的数据,关闭连接主要有两条路径:

通过业务路径:

at org.postgresql.jdbc.PgConnection.close(PgConnection.java:857)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:186)

at com.alibaba.druid.filter.FilterAdapter.connection_close(FilterAdapter.java:777)

at com.alibaba.druid.filter.logging.LogFilter.connection_close(LogFilter.java:481)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:181)

at com.alibaba.druid.filter.stat.StatFilter.connection_close(StatFilter.java:294)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:181)

at com.alibaba.druid.proxy.jdbc.ConnectionProxyImpl.close(ConnectionProxyImpl.java:114)

at com.alibaba.druid.util.JdbcUtils.close(JdbcUtils.java:85)

at com.alibaba.druid.pool.DruidDataSource.discardConnection(DruidDataSource.java:1546)

at com.alibaba.druid.pool.DruidDataSource.recycle(DruidDataSource.java:2011)

at com.alibaba.druid.pool.DruidPooledConnection.recycle(DruidPooledConnection.java:351)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_recycle(FilterChainImpl.java:5049)

at com.alibaba.druid.filter.logging.LogFilter.dataSource_releaseConnection(LogFilter.java:907)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_recycle(FilterChainImpl.java:5045)

at com.alibaba.druid.filter.stat.StatFilter.dataSource_releaseConnection(StatFilter.java:711)

at com.alibaba.druid.filter.FilterChainImpl.dataSource_recycle(FilterChainImpl.java:5045)

at com.alibaba.druid.pool.DruidPooledConnection.syncClose(DruidPooledConnection.java:324)

at com.alibaba.druid.pool.DruidPooledConnection.close(DruidPooledConnection.java:270)

at org.springframework.jdbc.datasource.DataSourceUtils.doCloseConnection(DataSourceUtils.java:341)

at org.springframework.jdbc.datasource.DataSourceUtils.doReleaseConnection(DataSourceUtils.java:328)

at org.springframework.jdbc.datasource.DataSourceUtils.releaseConnection(DataSourceUtils.java:294)

at org.springframework.jdbc.datasource.DataSourceTransactionManager.doCleanupAfterCompletion(DataSourceTransactionManager.java:329)

at org.springframework.transaction.support.AbstractPlatformTransactionManager.cleanupAfterCompletion(AbstractPlatformTransactionManager.java:1016)

at org.springframework.transaction.support.AbstractPlatformTransactionManager.processCommit(AbstractPlatformTransactionManager.java:811)

at org.springframework.transaction.support.AbstractPlatformTransactionManager.commit(AbstractPlatformTransactionManager.java:730)

at org.springframework.transaction.interceptor.TransactionAspectSupport.commitTransactionAfterReturning(TransactionAspectSupport.java:487)

at org.springframework.transaction.interceptor.TransactionAspectSupport.invokeWithinTransaction(TransactionAspectSupport.java:291)

at org.springframework.transaction.interceptor.TransactionInterceptor.invoke(TransactionInterceptor.java:96)

at org.springframework.aop.framework.ReflectiveMethodInvocation.proceed(ReflectiveMethodInvocation.java:179)

at org.springframework.aop.framework.JdkDynamicAopProxy.invoke(JdkDynamicAopProxy.java:213)

at com.sun.proxy.$Proxy303.xxxxxxx业务方法(Unknown Source)

at sun.reflect.GeneratedMethodAccessor149.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at com.taobao.hsf.remoting.provider.ReflectInvocationHandler.handleRequest0(ReflectInvocationHandler.java:83)

通过druid Destroy线程路径:

at org.postgresql.jdbc.PgConnection.close(PgConnection.java:857)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:186)

at com.alibaba.druid.filter.FilterAdapter.connection_close(FilterAdapter.java:777)

at com.alibaba.druid.filter.logging.LogFilter.connection_close(LogFilter.java:481)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:181)

at com.alibaba.druid.filter.stat.StatFilter.connection_close(StatFilter.java:294)

at com.alibaba.druid.filter.FilterChainImpl.connection_close(FilterChainImpl.java:181)

at com.alibaba.druid.proxy.jdbc.ConnectionProxyImpl.close(ConnectionProxyImpl.java:114)

at com.alibaba.druid.util.JdbcUtils.close(JdbcUtils.java:85)

at com.alibaba.druid.pool.DruidDataSource.shrink(DruidDataSource.java:3194)

at com.alibaba.druid.pool.DruidDataSource$DestroyTask.run(DruidDataSource.java:2938)

at com.alibaba.druid.pool.DruidDataSource$DestroyConnectionThread.run(DruidDataSource.java:2922)

【抓取到的创建PgConnection的本地端口号】如果没有出现在【抓取的关闭PgConnection的本地端口号】中,那么这个本地端口号对应的连接就是没有被关闭的,通过与netstat查的端口号对比,是一致的,所以猜测没有调用到PgConnection.close或者调用PgConnection.close出现异常了?

连接池到底做没做释放连接的操作?

以下分别从宏观统计数据和单个未关闭连接的角度分析:数据库连接池到底做没做释放连接的操作。

宏观统计数据

druid记录了很多指标数据:

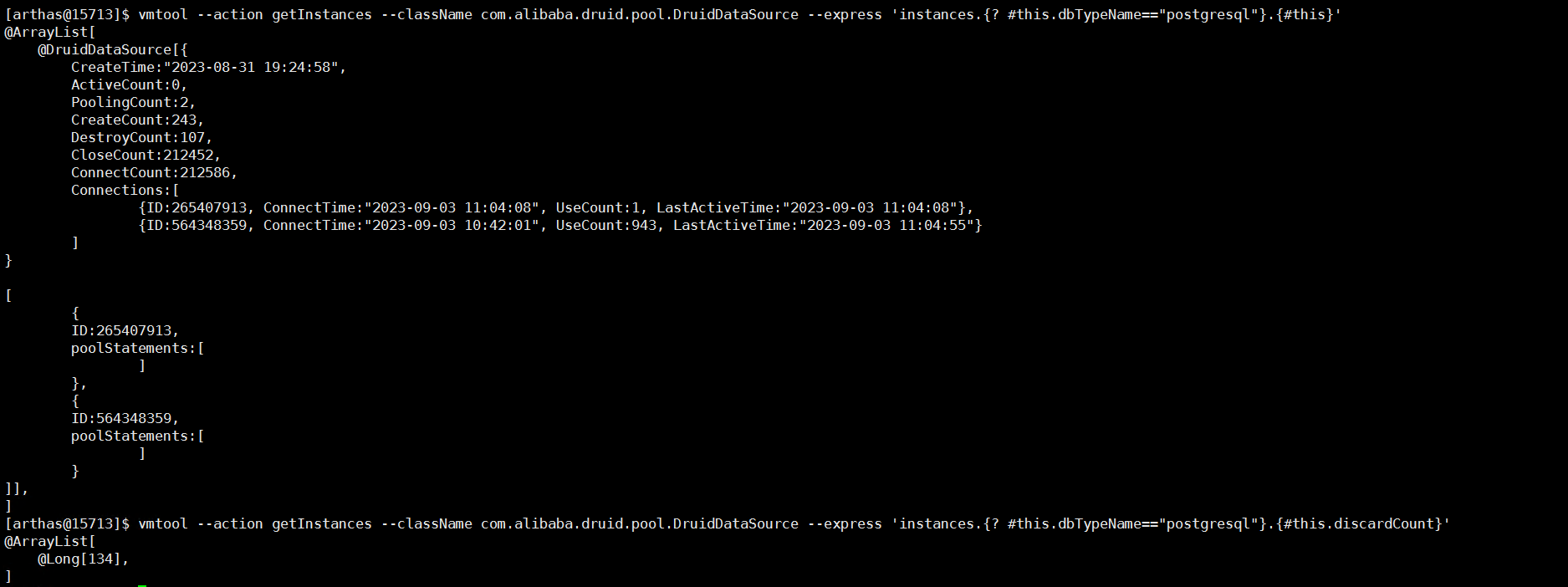

vmtool --action getInstances --className com.alibaba.druid.pool.DruidDataSource --express 'instances.{? #this.dbTypeName=="postgresql"}.{#this}'

vmtool --action getInstances --className com.alibaba.druid.pool.DruidDataSource --express 'instances.{? #this.dbTypeName=="postgresql"}.{#this.discardCount}'

图上几个关键字段说明如下:

| 序号 | 字段 | 说明 |

|---|---|---|

| 1 | ActiveCount | 当前正在被应用程序使用中的连接数量 |

| 2 | PoolingCount | 当前连接池中空闲的连接数量 |

| 3 | CreateCount | 连接池创建过的物理连接的数量 |

| 4 | DestroyCount | 连接池关闭过的物理连接的数量 |

| 5 | ConnectCount | 应用向连接池申请过的逻辑连接的数量 |

| 6 | CloseCount | 应用向连接池释放过的逻辑连接的数量 |

| 7 | discardCount | 关闭过的物理连接的数量,具体逻辑可以查看discardConnection和shrink方法中的逻辑 |

这里我们分析两个数据,分别是:

物理连接创建和关闭

通过对druid逻辑分析,得出公式:

CreateCount = ActiveCount + PoolingCount + DestroyCount + discardCount,

将数据代入以上公式,即:243 = 0 + 2 + 107 + 134。

逻辑连接申请和释放

通过对druid逻辑分析,得出公式:

ConnectCount = ActiveCount + CloseCount + discardCount,

将数据代入以上公式,即:212586 = 0 + 212452 + 134。

通过以上分析,从连接池的角度是已经释放连接了。

单个未关闭连接的角度

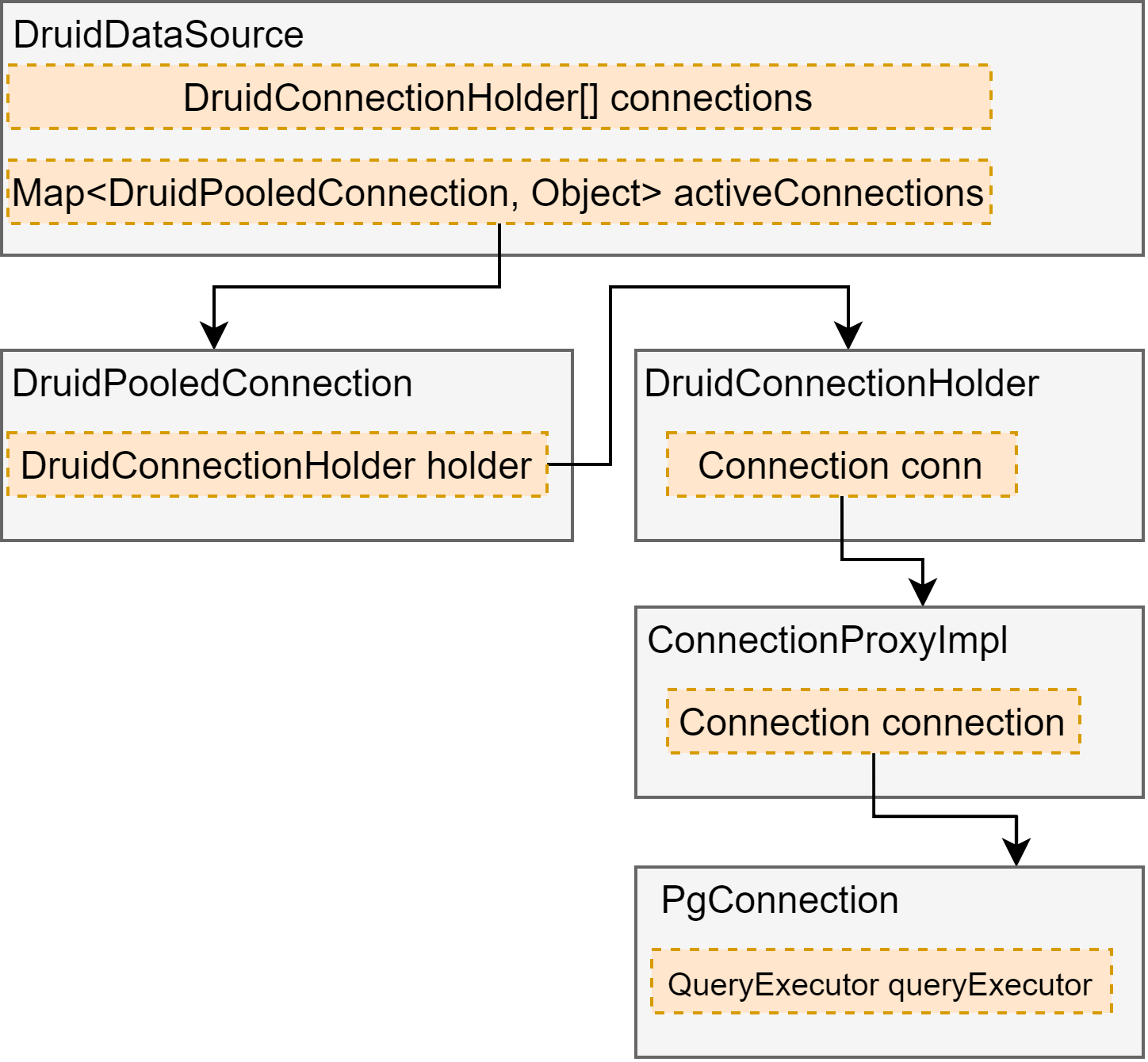

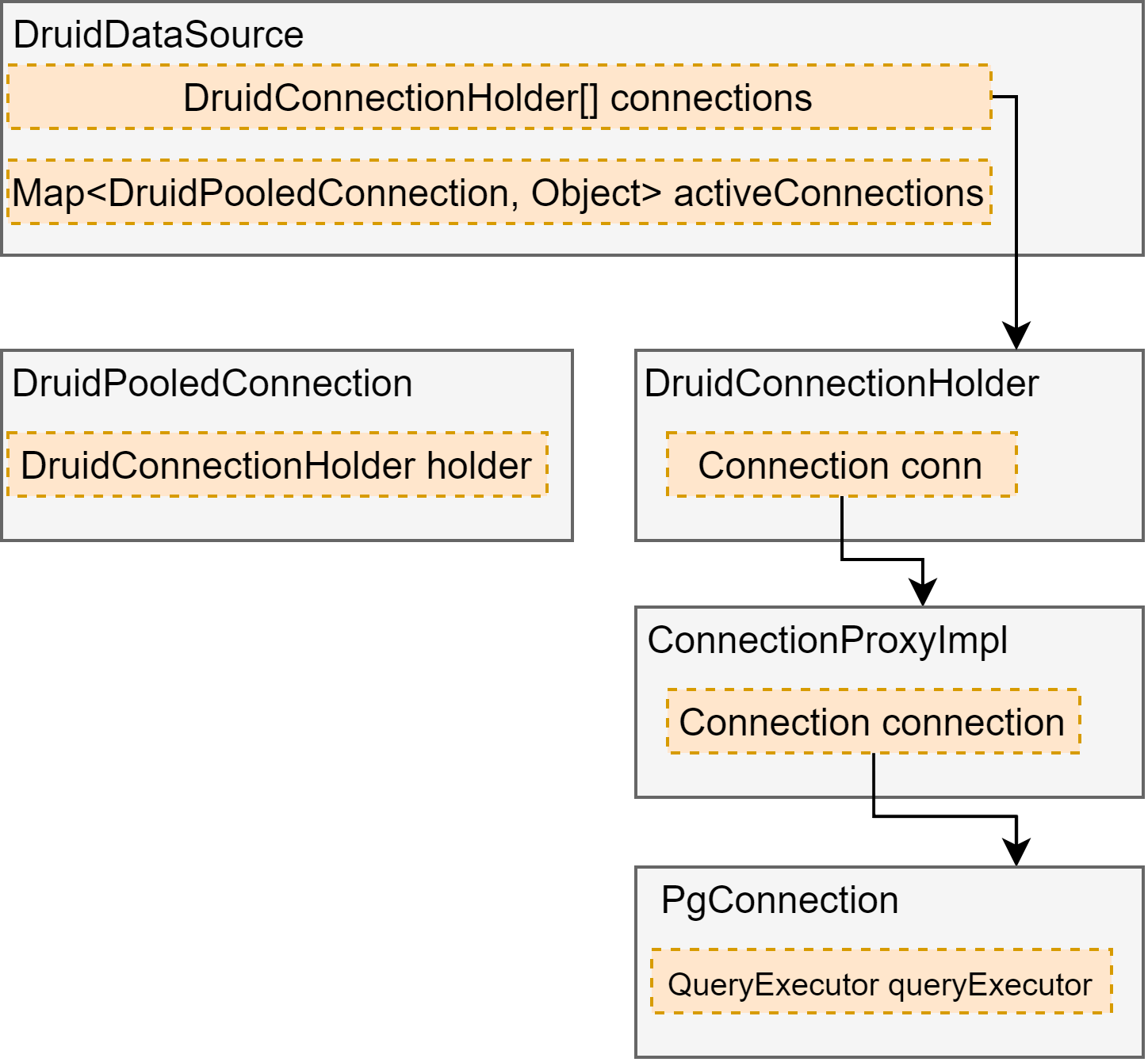

首先需要弄清楚连接池中几个关键对象的关系,当应用向连接池申请连接的时候,连接池返回给应用的是DruidPooledConnection;当应用归还连接到连接池的时候,调用的是DruidPooledConnection.close();某些条件下连接会被关闭。

应用向连接池申请连接

应用向连接池归还连接

归还连接的时候,代码不会走到com.alibaba.druid.util.JdbcUtils.close的逻辑。

关闭连接

如果一个PgConnection被关闭,那么与该PgConnection之前有关联的对象的状态会有变化,下面我们分析下与本地端口号是34802的PgConnection有关系的对象的状态。

DruidPooledConnection

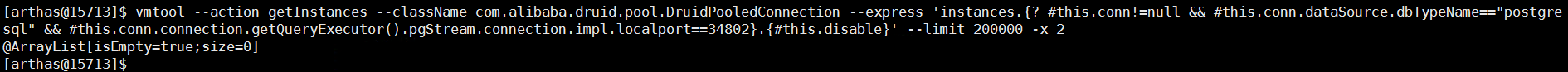

vmtool --action getInstances --className com.alibaba.druid.pool.DruidPooledConnection --express 'instances.{? #this.conn!=null && #this.conn.dataSource.dbTypeName=="postgresql" && #this.conn.connection.getQueryExecutor().pgStream.connection.impl.localport==34802}.{#this.disable}' --limit 200000 -x 2

没有找到对应的对象,说明已经调用了DruidPooledConnection.close(),因为调用close后,会设置该对象的conn为null。

DruidConnectionHolder

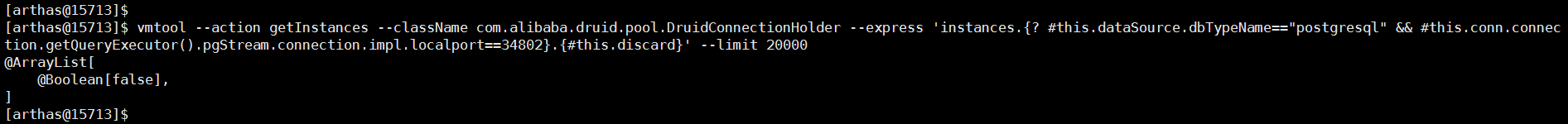

vmtool --action getInstances --className com.alibaba.druid.pool.DruidConnectionHolder --express 'instances.{? #this.dataSource.dbTypeName=="postgresql" && #this.conn.connection.getQueryExecutor().pgStream.connection.impl.localport==34802}.{#this.discard}' --limit 20000

DruidConnectionHolder.discard=false,因为连接的关闭有两条路径:

- 通过DestroyTask.run路径关闭,不会设置DruidConnectionHolder.discard为true,分析发现泄露连接的discard字段值都为false,所以泄露的连接是通过该路径执行的关闭

- 通过DruidPooledConnection.close()路径关闭,会设置DruidConnectionHolder.discard为true

ConnectionProxyImpl

@Override

public void close() throws SQLException {

FilterChainImpl chain = createChain();

chain.connection_close(this);

closeCount++;

recycleFilterChain(chain);

}

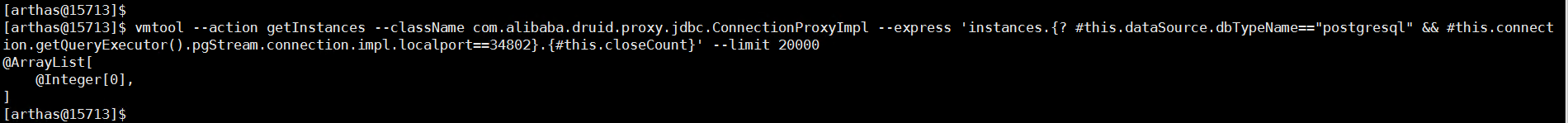

vmtool --action getInstances --className com.alibaba.druid.proxy.jdbc.ConnectionProxyImpl --express 'instances.{? #this.dataSource.dbTypeName=="postgresql" && #this.connection.getQueryExecutor().pgStream.connection.impl.localport==34802}.{#this.closeCount}' --limit 20000

ConnectionProxyImpl.closeCount=0,如果连接池正确关闭的话应该等于1。

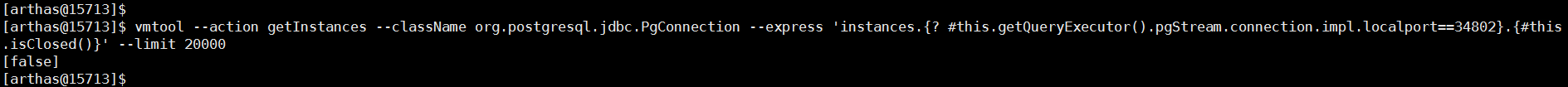

PgConnection

@Override

public void close() throws SQLException {

if (queryExecutor == null) {

return;

}

if (queryExecutor.isClosed()) {

return;

}

releaseTimer();

queryExecutor.close();

openStackTrace = null;

}

// queryExecutor.close()

@Override

public void close() {

if (closed) {

return;

}

try {

LOGGER.log(Level.FINEST, " FE=> Terminate");

sendCloseMessage();

pgStream.flush();

pgStream.close();

} catch (IOException ioe) {

LOGGER.log(Level.FINEST, "Discarding IOException on close:", ioe);

}

closed = true;

}

// pgStream.close()

@Override

public void close() throws IOException {

if (encodingWriter != null) {

encodingWriter.close();

}

pgOutput.close();

pgInput.close();

connection.close();

}

PgConnection.isClosed()=false,如果连接正确关闭的话应该等于true。

继续分析

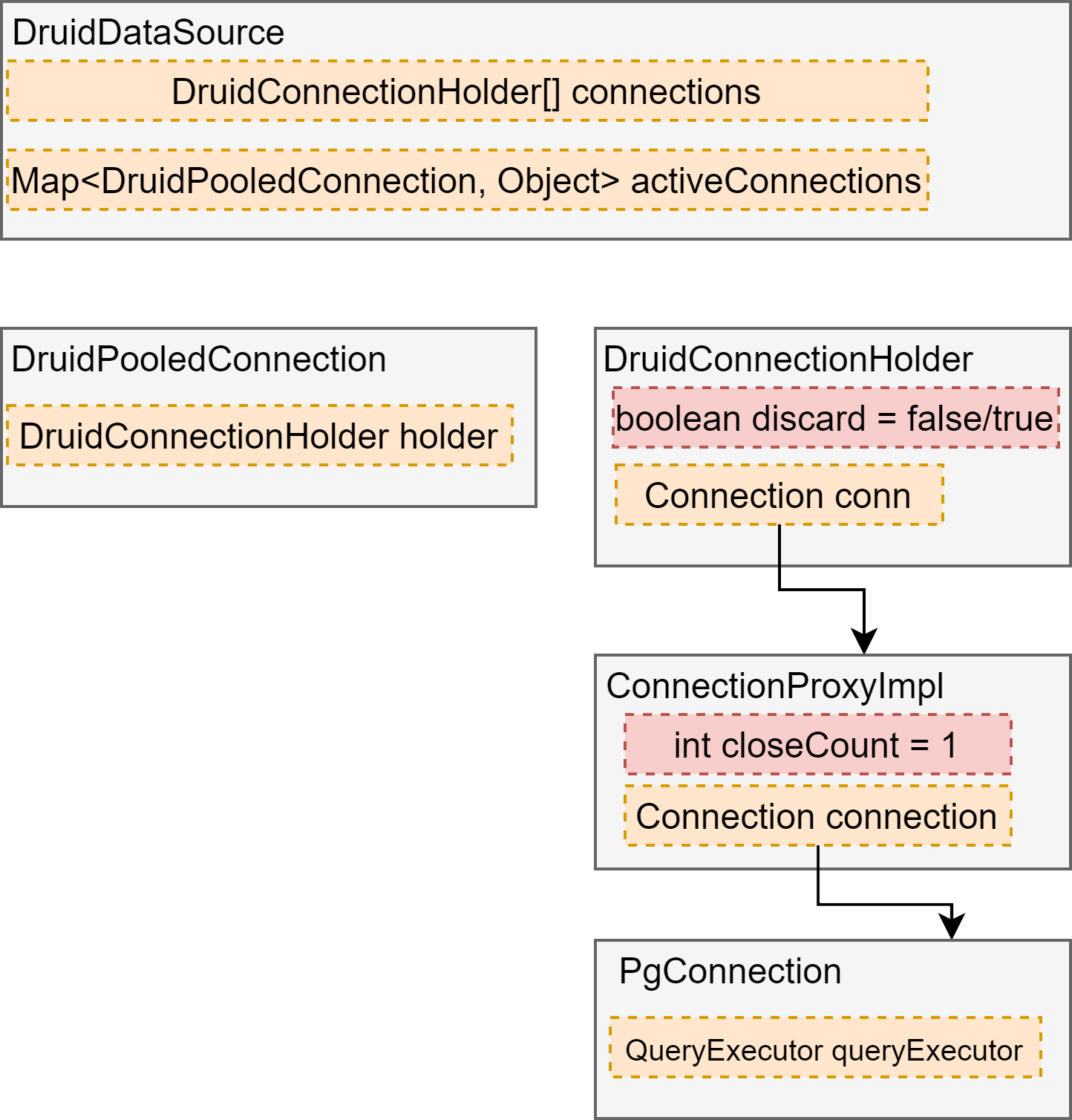

从以上分析可知,泄露连接DruidConnectionHolder.discard都为false,所以发现问题的代码大概率出现在DestroyTask.run()方法中:该方法主要包括shrink方法和removeAbandoned方法,其中removeAbandoned方法已经在前面分析过,下面主要分析下shrink逻辑,shrink逻辑主要包括(我们的配置不涉及keepAlive处理逻辑):

【筛选出evictConnections和keepAliveConnections】、【从connections中去掉evictConnections和keepAliveConnections的连接】、【处理evictConnections和keepAliveConnections】。

连接池删除了连接,但是连接没close掉?

最开始怀疑问题出在【处理evictConnections和keepAliveConnections】,即在关闭连接的时候出现了异常,虽然连接池中删除了该连接,但是close该连接的时候并没有关闭干净,所以通过arthas watch抓取close阶段是否出现异常:

watch com.alibaba.druid.proxy.jdbc.ConnectionProxyImpl close "{target.connection.getQueryExecutor().pgStream.connection.impl.localport,@org.apache.commons.lang3.exception.ExceptionUtils@getStackTrace(new java.lang.Exception())}" 'target.dataSource.dbTypeName=="postgresql"' -e > /xxx/xxx/xxx/error.txt &

结果没有抓到。

另外从PgConnection角度分析,如果PgConnection调用了close方法,那么PgConnection相关联的org.postgresql.core.QueryExecutorCloseAction类的PG_STREAM_UPDATER会变为null,而PG_STREAM_UPDATER并没有变为null。下面是QueryExecutorCloseAction中的方法:

@Override

public void close() throws IOException {

... ...

PGStream pgStream = this.pgStream;

if (pgStream == null || !PG_STREAM_UPDATER.compareAndSet(this, pgStream, null)) {

// The connection has already been closed

return;

}

sendCloseMessage(pgStream);

if (pgStream.isClosed()) {

return;

}

pgStream.flush();

pgStream.close();

}

总上分析,泄露的连接压根就没有调用其close方法。

难道在【从connections中去掉evictConnections和keepAliveConnections的连接】阶段把连接弄丢了?

通过分析代码及翻找druid issues,出问题的代码主要在:

int removeCount = evictCount + keepAliveCount;

if (removeCount > 0) {

System.arraycopy(connections, removeCount, connections, 0, poolingCount - removeCount);

Arrays.fill(connections, poolingCount - removeCount, poolingCount, null);

poolingCount -= removeCount;

}

问题大概是这样子的:

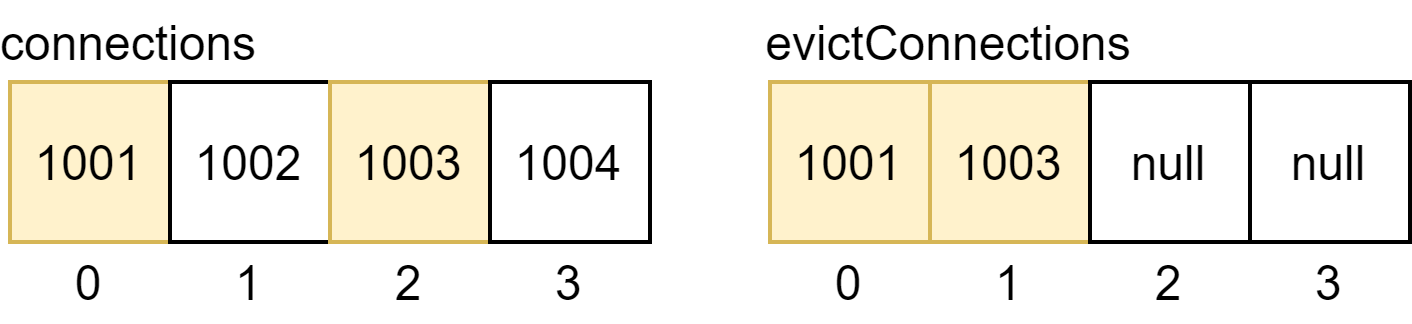

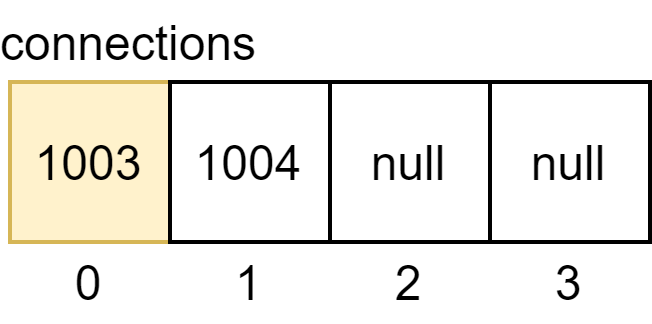

1.假设connections中有四个连接,分别是1001、1002、1003、1004,其中1001、1003被确认是evictConnection

2.去除connections中的evictConnection

从上面图中可以看出,最终把1002连接给弄丢了,所以该连接也不会再调用到close方法,导致了连接泄露。

解决办法

从druid 1.2.18开始对shrink逻辑进行了优化,可以尝试切换到新版本